Governed AI is artificial intelligence that operates within defined rules for data handling, access control, audit logging, instruction boundaries, and compliance documentation. Unlike ungoverned AI tools where businesses have no visibility into how data is processed or what the AI says, governed AI gives organizations control, accountability, and proof. Every business handling client data needs governed AI because the alternative is trusting a black box with your clients’ most sensitive information.

What Is Governed AI?

Governed AI is an AI system that operates within explicit, enforceable rules for how it handles data, who can access it, what it’s allowed to say, and how every interaction is recorded. It’s the difference between handing a new employee a blank check and hiring someone with clear job responsibilities, documented processes, and accountability.

In practice, governed AI means five things. Your data doesn’t leave your control. Every AI interaction creates a verifiable record. Access is restricted to the right people. The AI follows instructions you’ve defined and can’t be manipulated into breaking them. And you can produce documentation that demonstrates all of this to auditors, regulators, or clients who ask.

This isn’t a theoretical concept. It’s a practical requirement for any business that handles other people’s information, whether that’s financial records, health data, legal documents, or simply client communications that are expected to stay confidential.

What Are the 5 Pillars of AI Governance for Business?

The five pillars of AI governance for business are: data controls, audit trails, access management, instruction guardrails, and compliance documentation. Each pillar addresses a specific risk that ungoverned AI creates.

Pillar 1: Data Controls

Data controls determine what happens to the information you put into an AI system. This covers:

- Data isolation. Your information is not mixed with other users’ data or used to train the AI model.

- Data residency. You know where your data is physically stored and can verify it meets jurisdictional requirements.

- Data retention. Clear policies on how long data is kept and how it’s deleted when no longer needed.

- Data encryption. Information is encrypted both in transit and at rest.

Without data controls, your client’s financial statements, personal details, or proprietary business information could end up anywhere. On a governed platform like LaunchLemonade, data controls are built into the infrastructure, not bolted on as an afterthought.
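To make retention and isolation concrete, here is a minimal Python sketch. It assumes a hypothetical `StoredRecord` type tagged with a `client_id`, and a hypothetical policy that deletes anything older than a fixed number of days. Encryption and residency are handled by the storage layer and are not shown. This is not how LaunchLemonade or any other specific platform implements these controls.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class StoredRecord:
    record_id: str
    client_id: str          # hypothetical tenant key used for data isolation
    created_at: datetime    # timezone-aware UTC timestamp

def purge_expired(records, retention_days, now=None):
    """Split records into (kept, deleted) using a hypothetical retention window."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    kept = [r for r in records if r.created_at >= cutoff]
    deleted = [r for r in records if r.created_at < cutoff]
    return kept, deleted

def records_for_client(records, client_id):
    """Data isolation: only ever return records that belong to one client."""
    return [r for r in records if r.client_id == client_id]

# Usage example with fixed dates so the result is reproducible.
now = datetime(2025, 1, 31, tzinfo=timezone.utc)
records = [
    StoredRecord("r1", "acme", now - timedelta(days=10)),
    StoredRecord("r2", "acme", now - timedelta(days=120)),
    StoredRecord("r3", "globex", now - timedelta(days=5)),
]
kept, deleted = purge_expired(records, retention_days=90, now=now)
print([r.record_id for r in kept])                            # ['r1', 'r3']
print([r.record_id for r in deleted])                         # ['r2']
print([r.record_id for r in records_for_client(kept, "acme")])  # ['r1']
```

In this sketch the retention rule is applied as a single, explicit step. That makes it easy to document and audit.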

Pillar 2: Audit Trails

An audit trail is a timestamped, immutable record of every interaction between a user, the AI, and the data it accesses. A proper audit trail captures:

- What question or instruction was given

- What data the AI accessed to formulate its response

- What the AI said in return

- Which AI model was used

- Who initiated the interaction

- The exact time of every step

Think of it as a receipt for every AI conversation. If something goes wrong (a client receives incorrect information, a compliance question arises, a dispute occurs), you can trace exactly what happened, when, and why.
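One common way to make an audit log hard to alter silently is hash chaining. Each entry stores a hash of the previous entry, so editing any earlier record breaks the chain. The Python sketch below captures the fields listed above. It uses a hypothetical `AuditLog` class and a placeholder model name, `model-x`. It is tamper-evident rather than tamper-proof, and it is only an illustration, not any vendor's actual logging format.

```python
import hashlib
import json
from datetime import datetime, timezone

class AuditLog:
    """Append-only log; each entry carries the hash of the entry before it."""

    def __init__(self):
        self.entries = []

    def record(self, user, prompt, data_accessed, response, model):
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,                        # who initiated the interaction
            "prompt": prompt,                    # question or instruction given
            "data_accessed": list(data_accessed),
            "response": response,                # what the AI said in return
            "model": model,                      # which AI model was used
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash, with a stable key order.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def verify(self):
        """Return False if any entry was edited, removed, or reordered."""
        prev_hash = "0" * 64
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True

log = AuditLog()
log.record("j.smith", "Summarize Q3 statement", ["acme/q3.pdf"], "Summary text", "model-x")
log.record("j.smith", "Draft client email", [], "Dear client...", "model-x")
print(log.verify())  # True
log.entries[0]["response"] = "edited after the fact"
print(log.verify())  # False
```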

Pillar 3: Access Management

Access management controls who can use each AI assistant, what data they can access, and what actions they can perform. In a business context, this means:

- Role-based access. Your junior associate doesn’t need access to the same AI tools and client data as a senior partner.

- Client isolation. If you serve multiple clients, their data and AI interactions stay completely separate.

- Permission levels. Some users can build and modify assistants, others can only use them.

This pillar is especially important for multi-client businesses like consulting firms, accounting practices, and financial advisory firms where data from one client must never leak into another client’s context.
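Role-based access and client isolation can be combined into a single check. The action must be permitted for the user's role, and the user must be assigned to the client. The role names and permission sets in this Python sketch are hypothetical. Unknown roles are denied by default.

```python
from dataclasses import dataclass, field

# Hypothetical role -> permitted actions mapping.
ROLE_PERMISSIONS = {
    "partner": {"use_assistant", "build_assistant", "edit_assistant"},
    "associate": {"use_assistant"},
}

@dataclass
class User:
    name: str
    role: str
    clients: set = field(default_factory=set)  # clients this user is assigned to

def is_allowed(user, action, client_id):
    """Allow only if the role permits the action AND the user serves that client."""
    role_ok = action in ROLE_PERMISSIONS.get(user.role, set())  # deny unknown roles
    client_ok = client_id in user.clients                       # client isolation
    return role_ok and client_ok

alice = User("alice", "partner", {"acme", "globex"})
bob = User("bob", "associate", {"acme"})
print(is_allowed(bob, "use_assistant", "acme"))       # True
print(is_allowed(bob, "build_assistant", "acme"))     # False: role lacks permission
print(is_allowed(bob, "use_assistant", "globex"))     # False: not bob's client
print(is_allowed(alice, "edit_assistant", "globex"))  # True
```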

Pillar 4: Instruction Guardrails

Instruction guardrails are the rules you set for what the AI can and cannot do. This goes beyond simple content filtering. It includes:

- Topic boundaries. The AI stays within its defined scope and redirects questions it shouldn’t answer.

- Tone and language rules. The AI communicates in ways that match your professional standards.

- Escalation triggers. Certain questions or topics automatically route to a human.

- Factual grounding. The AI only references information from your approved knowledge base, not general internet information that may be inaccurate.

Without guardrails, an AI assistant might provide investment advice when it should only be summarizing data, or share confidential client information in response to a cleverly worded question. Governed platforms enforce these boundaries at the system level.
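The Python sketch below shows where topic-boundary and escalation checks sit: on the request, before it reaches the model. The regex rules and the `check_request` function are hypothetical. Simple pattern matching like this is easy to phrase around, so treat it as an illustration of the control point, not as a robust defense.

```python
import re
from dataclasses import dataclass

@dataclass
class GuardrailDecision:
    action: str   # "answer", "redirect", or "escalate"
    reason: str

# Hypothetical rules; a real deployment would define these for its own scope.
OUT_OF_SCOPE = [r"\b(buy|sell)\b.*\b(stock|shares|fund)\b", r"\binvestment advice\b"]
ESCALATE = [r"\bcomplaint\b", r"\blawsuit\b", r"\bclose my account\b"]

def check_request(text):
    """Decide how to handle a request before it is sent to the model."""
    lowered = text.lower()
    for pattern in ESCALATE:  # escalation triggers take priority
        if re.search(pattern, lowered):
            return GuardrailDecision("escalate", f"matched escalation rule {pattern!r}")
    for pattern in OUT_OF_SCOPE:  # topic boundaries
        if re.search(pattern, lowered):
            return GuardrailDecision("redirect", f"outside assistant scope ({pattern!r})")
    return GuardrailDecision("answer", "within scope")

print(check_request("Should I sell my shares in this fund?").action)  # redirect
print(check_request("I want to file a complaint").action)             # escalate
print(check_request("Summarize last month's statement").action)       # answer
```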

Pillar 5: Compliance Documentation

Compliance documentation is the ability to produce evidence that your AI system meets regulatory requirements, industry standards, and client agreements. This includes:

- Processing records showing how data moves through the system.

- Policy documentation describing your AI governance framework.

- Incident logs if anything goes wrong.

- Audit reports that can be shared with regulators or clients.

For businesses in regulated industries, this pillar turns AI from a compliance risk into a compliance asset. You’re not just using AI responsibly. You can prove it.
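Much of this documentation can be generated from the audit trail itself. The Python sketch below builds a simple processing record for a reporting period. It assumes a hypothetical log format in which each entry is a dict with `user`, `model`, and `data_accessed` keys. The model names are placeholders.

```python
from collections import Counter

def processing_summary(entries, period):
    """Summarize audit-log entries into a processing record (hypothetical format)."""
    sources = sorted({src for e in entries for src in e["data_accessed"]})
    return {
        "period": period,
        "total_interactions": len(entries),
        "interactions_by_user": dict(Counter(e["user"] for e in entries)),
        "models_used": sorted({e["model"] for e in entries}),
        "data_sources_accessed": sources,
    }

entries = [
    {"user": "alice", "model": "model-x", "data_accessed": ["acme/q3.pdf"]},
    {"user": "bob", "model": "model-x", "data_accessed": []},
    {"user": "alice", "model": "model-y", "data_accessed": ["acme/q3.pdf", "acme/tax.xlsx"]},
]
report = processing_summary(entries, "2025-Q1")
print(report["total_interactions"])     # 3
print(report["interactions_by_user"])   # {'alice': 2, 'bob': 1}
print(report["data_sources_accessed"])  # ['acme/q3.pdf', 'acme/tax.xlsx']
```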

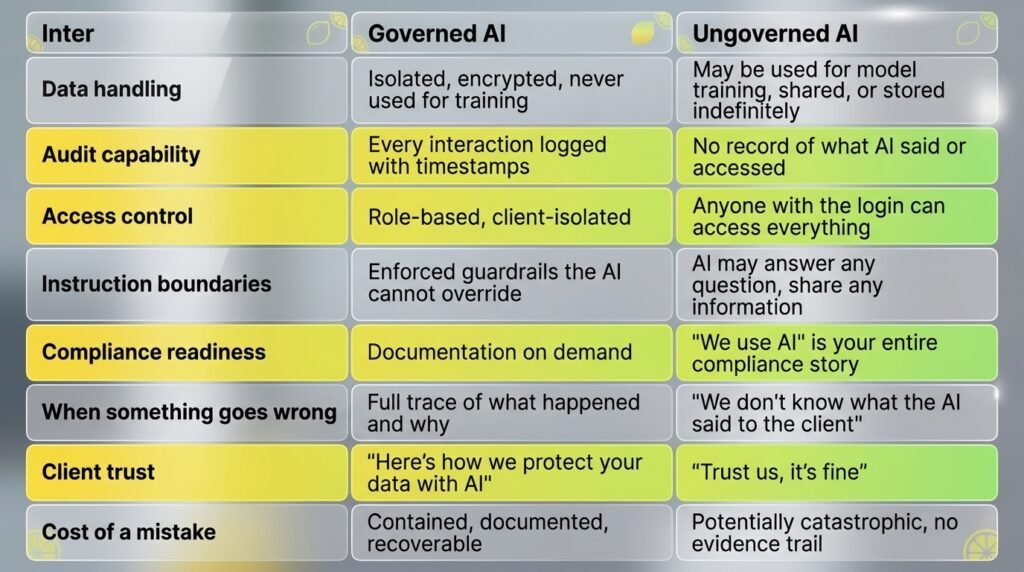

How Does Governed AI Compare to Ungoverned AI?

The core difference is accountability. Governed AI gives you visibility and control over every aspect of how AI interacts with your data and clients. Ungoverned AI is a black box where you hope for the best.

The difference becomes starkly clear in three scenarios.

Scenario 1: A client asks how you use AI. With governed AI, you show them your governance framework, audit trail capabilities, and data handling policies. Without it, you give a vague answer and hope they don’t press further.

Scenario 2: An AI gives a client incorrect information. With governed AI, you pull the audit log, see exactly what happened, correct the issue, and document the resolution. Without it, you have no idea what the AI said, when, or why.

Scenario 3: A regulator or auditor asks about your AI practices. With governed AI, you produce compliance documentation within minutes. Without it, you spend weeks trying to reconstruct what systems you used and how.

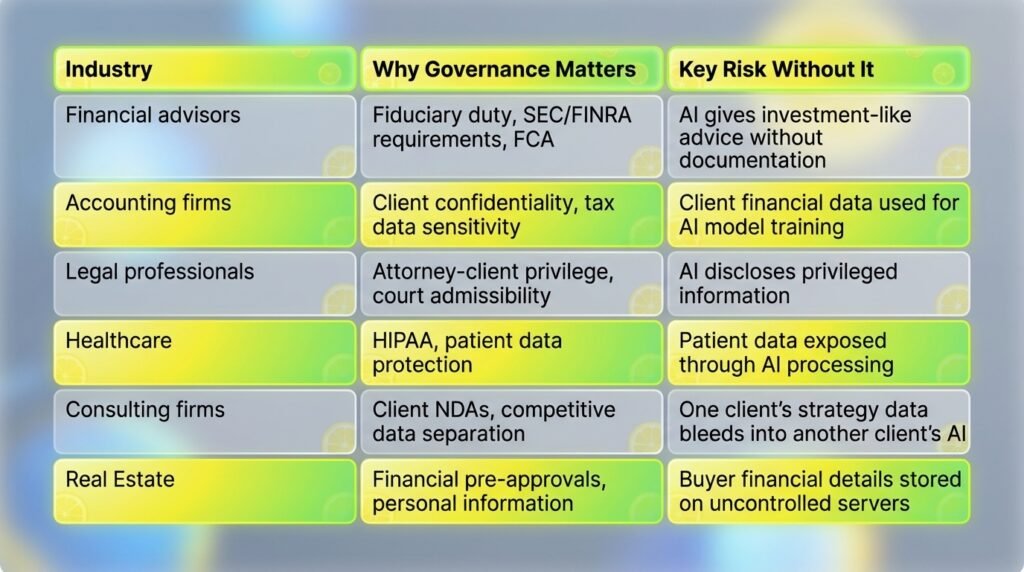

Which Industries Need Governed AI Most?

Any industry that handles client data, faces regulatory requirements, or operates under professional liability standards needs governed AI. This includes financial services, accounting, legal, healthcare, consulting, and real estate.

Each of these industries has its own reasons it can't afford ungoverned AI, but the common thread is trust. These businesses exist because clients trust them with sensitive information. Using ungoverned AI with that information is a breach of that trust, even if nothing bad has happened yet.

How Do You Evaluate Whether an AI Platform Is Governed?

Ask these 10 questions to determine whether an AI platform meets governance standards. If the vendor can't answer any one of them clearly, that's a red flag.

- Is my data used to train or improve your AI models?

- Where is my data physically stored?

- Can you provide a complete audit trail of every AI interaction?

- How is data isolated between different clients or projects?

- What access controls are available (role-based, client-based)?

- Can I set instruction guardrails that the AI cannot override?

- What happens to my data if I cancel my account?

- Do you have SOC 2, ISO 27001, or equivalent security certification?

- Can you provide compliance documentation for regulatory inquiries?

- How do you handle data breaches or AI-generated errors?

Platforms built for governance, like LaunchLemonade, can answer all 10 clearly because these features are part of the product architecture. They’re not add-ons. They’re the foundation.
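If you compare several vendors, recording each answer makes the red-flag rule explicit. In the Python sketch below, each question from the checklist is abbreviated to a hypothetical key. A vendor's answer is marked `True` only if it was clear and documented. Any question that is unanswered or unclear is flagged.

```python
# The 10 checklist questions, abbreviated as hypothetical keys.
QUESTIONS = [
    "trains_on_my_data", "storage_location", "full_audit_trail", "client_isolation",
    "access_controls", "unoverridable_guardrails", "data_on_cancellation",
    "security_certification", "compliance_docs", "breach_and_error_handling",
]

def red_flags(answers):
    """Return the questions a vendor did not answer clearly (missing keys count too)."""
    return [q for q in QUESTIONS if not answers.get(q, False)]

vendor_a = {q: True for q in QUESTIONS}
vendor_b = dict(vendor_a, full_audit_trail=False)
del vendor_b["data_on_cancellation"]
print(red_flags(vendor_a))  # []
print(red_flags(vendor_b))  # ['full_audit_trail', 'data_on_cancellation']
```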

What Does AI Governance Cost?

Governed AI platforms for businesses typically cost $25-$75 per seat per month, which is negligible compared to the cost of a data breach ($4.45 million on average), a regulatory fine, or a client lawsuit. The investment isn't in the software. It's in the protection.

To put the numbers in perspective:

- Average cost of a data breach (IBM Cost of a Data Breach Report, 2023): $4.45 million globally, $9.48 million in the US.

- Cost of governed AI platform: $25-$75/seat/month ($300-$900/year per seat).

- Cost of losing one major client due to a data handling incident: Varies, but the reputational damage often exceeds the direct financial loss.
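The arithmetic behind that comparison is simple. The sketch below assumes the $300-$900/year range corresponds to $25-$75 per seat per month (12 × $25 = $300, 12 × $75 = $900). It compares that spend with the $4.45 million global average breach cost. The 10-seat firm is a hypothetical example.

```python
MONTHS_PER_YEAR = 12
AVG_BREACH_GLOBAL = 4_450_000  # USD, global average cited above

def annual_cost(per_seat_monthly, seats=1):
    """Yearly platform spend for a given per-seat monthly price."""
    return per_seat_monthly * MONTHS_PER_YEAR * seats

low, high = annual_cost(25), annual_cost(75)
print(low, high)                   # 300 900
print(AVG_BREACH_GLOBAL // high)   # 4944 seat-years at the top of the range

firm = annual_cost(75, seats=10)   # hypothetical 10-seat firm
print(firm)                        # 9000
print(AVG_BREACH_GLOBAL // firm)   # 494 years of that firm's platform spend
```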

For small and mid-sized businesses, governed AI isn’t an enterprise luxury. It’s basic risk management. Platforms like LaunchLemonade offer governance features starting at $25/month, making this accessible to solo practitioners and small firms, not just large corporations.

How Is Governed AI Different from AI Compliance?

Governed AI is the system of controls and processes you put in place. AI compliance is the act of meeting specific regulatory or industry requirements. Governance enables compliance, but they’re not the same thing.

Think of it this way. Governance is building a house with proper electrical wiring, fire exits, and structural integrity. Compliance is passing the building inspection. You need the first to achieve the second, but having good governance gives you flexibility to meet multiple compliance requirements as they evolve.

This distinction matters because AI regulations are still developing. The EU AI Act is being phased in over several years, US states are proposing their own AI legislation, and industry-specific guidance is still emerging. Businesses with governed AI platforms can adapt to new requirements by adjusting their governance framework. Businesses using ungoverned AI will need to rip and replace every time a new regulation arrives.

How Do You Start Implementing AI Governance?

Start with your highest-risk use case (typically anything involving client data), choose a governed platform, and expand from there. You don’t need a 50-page AI governance strategy to begin.

Step 1: Identify where you’re currently using AI (or want to) with client data. This is your governance priority.

Step 2: Evaluate platforms against the 10 questions above. Choose one that’s governed by design.

Step 3: Set up your first governed AI assistant with proper data controls, access restrictions, and instruction guardrails. On a no-code platform like LaunchLemonade, this takes under 15 minutes.

Step 4: Document your governance framework. What data goes in, how it’s protected, who has access, and how interactions are logged.

Step 5: Review and refine. Check audit trails monthly. Update guardrails as your use cases evolve. Add new team members with appropriate access levels.
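Steps 4 and 5 can be kept honest with a small structured record: one field per governance decision, plus a check that flags empty sections and overdue reviews. The `GovernanceFramework` class and its fields in this Python sketch are hypothetical. They mirror the questions in Step 4 and assume a 30-day review cycle for the monthly check in Step 5.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class GovernanceFramework:
    """Hypothetical written record of the Step 4 governance decisions."""
    data_inputs: list         # what data goes in
    protections: list         # how it is protected
    access: dict              # who has access (user -> role)
    logging: str              # how interactions are logged
    last_review: date
    review_interval_days: int = 30  # Step 5: monthly review

    def missing_sections(self):
        sections = {"data_inputs": self.data_inputs, "protections": self.protections,
                    "access": self.access, "logging": self.logging}
        return [name for name, value in sections.items() if not value]

    def review_overdue(self, today):
        return (today - self.last_review).days > self.review_interval_days

fw = GovernanceFramework(
    data_inputs=["client financial statements"],
    protections=["encrypted in transit and at rest", "90-day retention"],
    access={"alice": "partner", "bob": "associate"},
    logging="",
    last_review=date(2025, 1, 1),
)
print(fw.missing_sections())                 # ['logging']
print(fw.review_overdue(date(2025, 2, 15)))  # True (45 days since last review)
```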

The businesses that get governance right don’t treat it as a burden. They treat it as a competitive advantage. When you can tell clients “our AI is governed, auditable, and compliant,” that’s a trust signal your competitors using free AI tools can’t match.

Frequently Asked Questions

What is governed AI in simple terms?

Governed AI is an AI system that operates within defined rules for data protection, access control, audit logging, and compliance. It gives businesses visibility and control over how AI handles their data and interacts with their clients, instead of relying on a black box with no accountability.

Do small businesses need AI governance?

Yes, if they handle client data. A solo financial advisor using AI with client information has the same governance needs as a large firm. The difference is scale, not obligation. Governed platforms like LaunchLemonade make governance accessible at $25/month, so small businesses don’t need enterprise budgets to protect client data.

What happens if you use AI without governance?

Without governance, you have no record of what AI said to clients, no control over how data is used, no way to demonstrate compliance, and no ability to investigate when something goes wrong. In regulated industries, this creates legal liability. In any client-facing business, it’s a trust risk. A single incident can cost more than years of platform fees.

How is governed AI different from enterprise AI?

Enterprise AI is a pricing tier. Governed AI is an architecture principle. Governed AI platforms provide data controls, audit trails, and access management at every pricing level, not just for large organizations. LaunchLemonade offers governance features starting at $25/month for individual users.

Is AI governance required by law?

It depends on your industry and jurisdiction. The EU AI Act, GDPR, HIPAA, and various financial regulations all have implications for how AI handles data. Even where specific AI governance laws don’t yet exist, professional liability standards and client confidentiality agreements typically require the same protections that governed AI provides. It’s better to implement governance now than to scramble when regulations catch up.

Ready to use AI with the governance your clients expect? Build your first governed assistant on LaunchLemonade in under 15 minutes.