Not all AI platforms handle client data safely. The right secure AI platform includes end-to-end encryption, per-client data isolation, audit trails, role-based access controls, and a guarantee that your data never trains the AI model. Here’s an evaluation framework with the specific questions to ask vendors.

Why Does AI Security Matter for Client Data?

AI security matters for client data because every interaction with an AI agent involves sending sensitive information to a software system – and if that system isn’t built for security, your client data is at risk.

When you paste a client’s financial statement into ChatGPT, that data enters OpenAI’s systems. It may be stored, reviewed by staff, or used to improve the model. For a business owner sharing their own data, that’s a personal choice. For a professional handling client data, it’s a potential breach of fiduciary duty.

The security question isn’t “is AI safe?” – it’s “is THIS AI platform safe for THIS type of data?”

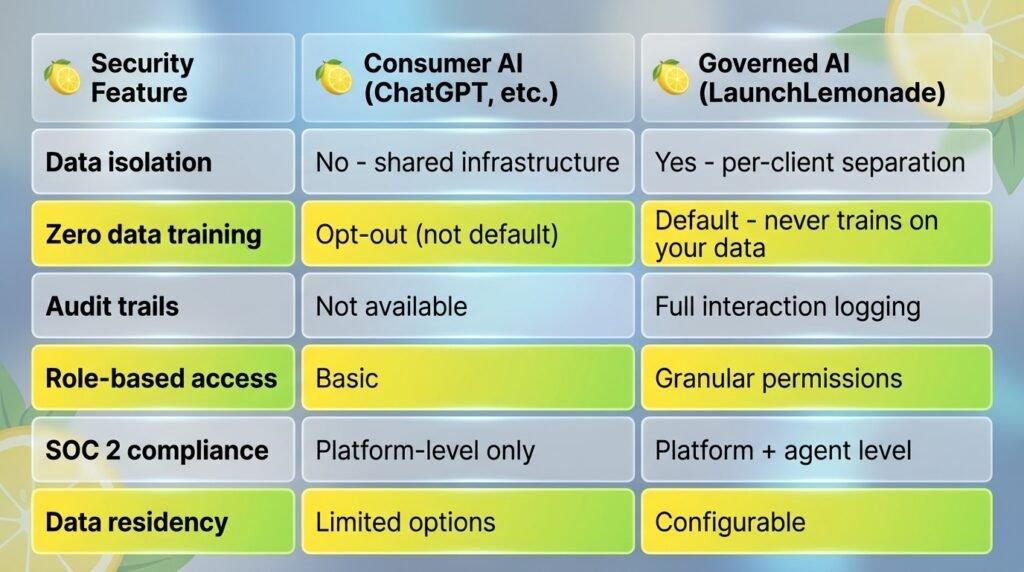

Consumer AI tools are designed for general use. They optimize for capability and convenience, not for the security requirements of professional services. Enterprise and governed AI platforms like LaunchLemonade are built specifically for businesses that handle sensitive data.

What Features Should a Client-Safe AI Platform Have?

A secure AI platform for client data should include these seven features at minimum:

1. Data isolation. Each client’s data stays separate from every other client’s data. No cross-contamination, no shared training pools.

2. Zero data training. The platform guarantees your client data never trains the underlying AI model. This is non-negotiable for fiduciary businesses.

3. Encryption at rest and in transit. All data encrypted using AES-256 at rest and TLS 1.3 in transit. No exceptions.

4. Audit trails. Every interaction logged – who asked what, when, and what the AI responded. Essential for compliance reviews.

5. Role-based access controls. Different team members get different permission levels. An intern shouldn’t have the same access as a partner.

6. SOC 2 compliance (or equivalent). The platform’s security practices have been independently audited. Ask for the report.

7. Data residency options. You can choose where client data is stored geographically. Important for firms with clients in multiple jurisdictions.
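To make features 1, 4, and 5 concrete, here is a minimal Python sketch of how per-client isolation, role-based access, and an audit trail can fit together. It is an illustration under stated assumptions, not how any particular platform implements them: the role names, permission sets, and the `authorize` helper are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "partner": {"read", "write", "export"},
    "associate": {"read", "write"},
    "intern": {"read"},
}

@dataclass
class User:
    name: str
    role: str
    clients: set  # IDs of the clients this user is assigned to

@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user, client_id, action, allowed):
        # Log who attempted what, on which client, when, and the outcome.
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user.name,
            "client_id": client_id,
            "action": action,
            "allowed": allowed,
        })

def authorize(user, client_id, action, log):
    """Allow an action only if the role permits it AND the user is
    assigned to that client. Every attempt is logged, allowed or not."""
    allowed = (
        action in ROLE_PERMISSIONS.get(user.role, set())
        and client_id in user.clients
    )
    log.record(user, client_id, action, allowed)
    return allowed

log = AuditLog()
intern = User("sam", "intern", {"client-a"})
partner = User("alex", "partner", {"client-a", "client-b"})

print(authorize(intern, "client-a", "read", log))     # True
print(authorize(intern, "client-a", "export", log))   # False: role lacks "export"
print(authorize(intern, "client-b", "read", log))     # False: not assigned to client-b
print(authorize(partner, "client-b", "export", log))  # True
print(len(log.entries))                               # 4: denied attempts are logged too
```

The design choice worth noting is that denied attempts are logged alongside allowed ones. A compliance review usually cares about both.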

LaunchLemonade includes all seven features by default. No enterprise pricing required – governed AI starts at $25/month.

How Do You Evaluate AI Platform Security Before Buying?

Ask these seven questions before signing any AI vendor contract for client-facing work:

- “Does my client data train your models?”

  - The only acceptable answer is no. Not “you can opt out.” Not “we anonymize it.” No.

- “Where is data stored and who can access it?”

  - You need specific answers – data center locations, employee access policies, subprocessor lists.

- “Can you provide your SOC 2 Type II report?”

  - Type II is important – it covers operational effectiveness over time, not just a point-in-time snapshot.

- “What happens to my data if I cancel?”

  - Good answer: complete deletion within 30 days with certification.

  - Bad answer: vague promises about data retention.

- “Do you have audit trails I can export?”

  - For regulated businesses, you need exportable logs for compliance reviews.

  - Built-in logging isn’t enough if you can’t extract it.

- “How do you handle data breaches?”

  - Look for specific notification timelines, incident response procedures, and contractual commitments.

- “Can different team members have different access levels?”

  - Role-based access isn’t optional when you have associates, partners, and support staff touching client data.

Red flags: Any vendor that can’t answer these questions clearly, deflects to “enterprise” pricing for security features, or requires you to read a 40-page privacy policy to find basic answers.
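If you are comparing several vendors, the seven questions can be tracked as a simple checklist. The Python sketch below is one way to do that. The answer keys and the `red_flags` function are hypothetical names chosen for illustration, and a missing answer is treated as a red flag, on the assumption that a vendor who can’t answer clearly has not passed.

```python
# Hypothetical answer keys, one per question above, with the answer you need.
REQUIRED_ANSWERS = {
    "trains_on_client_data": False,
    "discloses_storage_and_access": True,
    "provides_soc2_type2_report": True,
    "deletes_data_on_cancel": True,
    "exportable_audit_trails": True,
    "documented_breach_process": True,
    "role_based_access": True,
}

def red_flags(vendor_answers):
    """Return the questions where the vendor's answer is missing or unacceptable."""
    return [
        key
        for key, required in REQUIRED_ANSWERS.items()
        if vendor_answers.get(key) != required
    ]

# Example: a vendor that only offers an opt-out from training
# and never answered the breach question.
vendor = {
    "trains_on_client_data": True,
    "discloses_storage_and_access": True,
    "provides_soc2_type2_report": True,
    "deletes_data_on_cancel": True,
    "exportable_audit_trails": True,
    "role_based_access": True,
}

print(red_flags(vendor))
# ['trains_on_client_data', 'documented_breach_process']
```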

What Compliance Standards Should Your AI Platform Meet?

The compliance standards that matter depend on your industry:

Financial services: SOC 2 Type II, SEC recordkeeping requirements (if applicable), state-specific data protection laws, and fiduciary duty standards.

Accounting: SOC 2 Type II, IRS data security requirements, AICPA professional standards, and client confidentiality obligations.

Insurance: SOC 2 Type II, state insurance data security model laws, NAIC requirements, and policyholder data protection standards.

General professional services: SOC 2 Type II, state privacy laws (CCPA, etc.), industry-specific ethical obligations, and client engagement agreement terms.

The common thread: SOC 2 Type II is the minimum baseline. If an AI platform doesn’t have it, it isn’t ready for professional services work.
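The industry lists above can also be kept as a small lookup table, which makes the shared baseline easy to check. This Python sketch uses shortened labels taken from the lists above. The variable names are illustrative, and the table is not a complete statement of any industry’s legal obligations.

```python
# Shortened labels from the lists above; illustrative, not exhaustive.
STANDARDS_BY_INDUSTRY = {
    "financial services": {
        "SOC 2 Type II", "SEC recordkeeping requirements",
        "state data protection laws", "fiduciary duty standards",
    },
    "accounting": {
        "SOC 2 Type II", "IRS data security requirements",
        "AICPA professional standards", "client confidentiality obligations",
    },
    "insurance": {
        "SOC 2 Type II", "state insurance data security model laws",
        "NAIC requirements", "policyholder data protection standards",
    },
    "general professional services": {
        "SOC 2 Type II", "state privacy laws",
        "industry-specific ethical obligations", "client engagement agreement terms",
    },
}

# Standards that appear in every industry's list.
common_baseline = set.intersection(*STANDARDS_BY_INDUSTRY.values())
print(common_baseline)  # {'SOC 2 Type II'}
```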

FAQ

Q: Is ChatGPT safe to use with client data? A: Not in its default configuration. ChatGPT’s standard terms allow data to be used for model training. Even with the opt-out setting, there’s no data isolation between users, no audit trails, and no compliance certifications designed for regulated industries. For personal use, it’s fine. For client data, use a governed platform.

Q: What’s the difference between SOC 2 Type I and Type II? A: A Type I report attests that security controls are suitably designed at a specific point in time. A Type II report attests that those controls operated effectively over a period, usually 6 to 12 months. Type II provides stronger assurance because it demonstrates consistent security practices, not just a one-time setup.