If your business operates under regulatory oversight—finance, healthcare, legal, insurance—you can’t use just any AI tool. Compliant AI platforms include audit trails, data encryption, access controls, and governance features that satisfy regulators. Here’s what to look for and what to avoid.

What Makes an AI Platform Compliant?

A compliant AI platform is one that meets the security, privacy, and documentation standards required by your industry’s regulators—and can prove it during an audit.

Compliance isn’t a single checkbox. It’s a combination of technical controls (encryption, access management, data isolation), operational controls (audit trails, logging, reporting), and governance controls (policies, boundaries, oversight mechanisms).

The three pillars of AI compliance:

- Data protection – Client data is encrypted, isolated, and never used to train the AI model

- Accountability – Every AI interaction is logged and auditable

- Control – The business defines what the AI can and cannot do, and those boundaries are enforced

Most consumer AI tools fail on all three. They don’t encrypt data per client, they don’t log interactions in a compliance-ready format, and they don’t let you set enforceable boundaries.

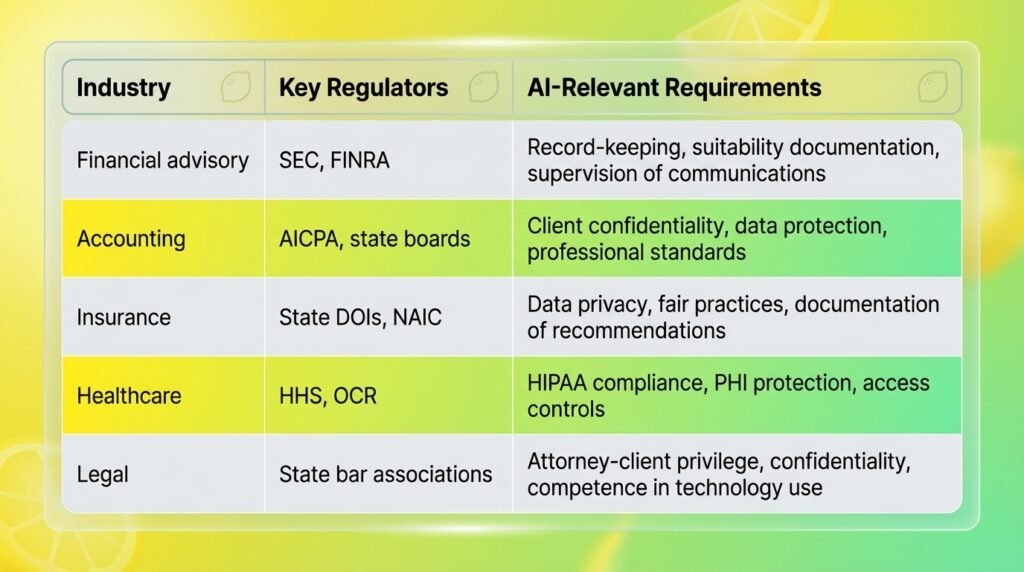

What Compliance Requirements Apply to AI in Regulated Industries?

Different industries face different regulatory requirements, but the core concerns overlap significantly.

The common thread: every regulated industry requires that you can document what happened, prove data was protected, and demonstrate that automated tools operated within defined boundaries.

An AI platform that doesn’t provide these capabilities is a compliance liability, regardless of how useful it is.

What Features Should You Look for in Compliant AI?

When evaluating AI platforms for regulated work, here are the non-negotiable features:

Audit Trails

Every interaction between users and AI agents must be logged. These logs should include who asked what, what the AI responded, which data sources were accessed, and timestamps. The logs must be exportable in formats your compliance team can review.
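As a rough illustration of what one such log entry might hold, here is a minimal Python sketch. The `AuditRecord` class, its field names, and the JSON Lines export are assumptions made for this example, not the schema of any particular platform.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """One logged interaction between a user and an AI agent (hypothetical schema)."""
    user_id: str          # who asked
    prompt: str           # what they asked
    response: str         # what the AI responded
    data_sources: tuple   # which data sources were accessed
    # UTC timestamp in ISO 8601 form, set when the record is created
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def export_jsonl(records):
    """Serialize records as JSON Lines: one interaction per line."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in records)
```

A plain-text format like this is easy for a compliance team to open, search, and archive. A real system would also need to protect the log itself from tampering, which this sketch does not attempt.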

Data Encryption

Data must be encrypted both at rest (stored) and in transit (moving between systems). Look for AES-256 encryption or equivalent for stored data, and TLS 1.2 or later for data in transit. Ask specifically whether the platform encrypts each client’s data with a separate key or uses shared encryption keys.
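To make the "separate keys versus shared keys" question concrete, the sketch below derives a distinct 256-bit key for each client from one master key, using the HKDF extract-then-expand pattern (RFC 5869) built from Python's standard `hmac` and `hashlib` modules. The function name `derive_client_key` and the `client:` label are hypothetical. The article does not say how any vendor manages keys, so treat this as one possible approach rather than a description of a real platform.

```python
import hashlib
import hmac


def derive_client_key(master_key: bytes, client_id: str) -> bytes:
    """Derive a 32-byte (256-bit) key that is unique to one client.

    Uses HKDF with HMAC-SHA256. A single expand block is enough
    because SHA-256 already outputs 32 bytes.
    """
    # Extract step: RFC 5869 uses a salt of zero bytes when none is given.
    salt = b"\x00" * hashlib.sha256().digest_size
    prk = hmac.new(salt, master_key, hashlib.sha256).digest()
    # Expand step: bind the output to this client via the "info" input.
    info = b"client:" + client_id.encode("utf-8")
    return hmac.new(prk, info + b"\x01", hashlib.sha256).digest()
```

In practice the master key would live in a key management service, and the derived key would be handed to a vetted AES-256 implementation. The point of the sketch is only that each client's data ends up under its own key.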

Access Controls

Look for role-based access control that lets you define who can use which agents and see which data. Your compliance officer needs different access than your admin assistant. Granular controls prevent accidental data exposure.
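A role-based check can be sketched in a few lines of Python. The roles, action names, and `PERMISSIONS` map below are hypothetical examples; a real platform would define its own.

```python
from enum import Enum


class Role(Enum):
    COMPLIANCE_OFFICER = "compliance_officer"
    ADMIN_ASSISTANT = "admin_assistant"


# Hypothetical permission map: each role lists the actions it may perform.
PERMISSIONS = {
    Role.COMPLIANCE_OFFICER: {"use_agent", "view_client_data", "export_audit_logs"},
    Role.ADMIN_ASSISTANT: {"use_agent"},
}


def is_allowed(role: Role, action: str) -> bool:
    """Deny by default: only actions explicitly granted to the role pass."""
    return action in PERMISSIONS.get(role, set())
```

The deny-by-default design matters here: an action that nobody remembered to list is refused rather than silently permitted.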

Data Isolation

Client data must be logically separated so that Agent A working on Client X never has access to Client Y’s information. This is critical for multi-client businesses like accounting firms and advisory practices.
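One way to picture logical separation: an agent is never handed the whole data store, only a view that contains a single client's documents. The Python sketch below assumes hypothetical `ClientScopedStore` and `ClientSession` classes and a naive substring search; it illustrates the idea rather than any product's architecture.

```python
from collections import defaultdict


class ClientScopedStore:
    """Holds documents in separate partitions, one per client."""

    def __init__(self):
        self._docs = defaultdict(list)  # client_id -> list of documents

    def add(self, client_id: str, document: str) -> None:
        self._docs[client_id].append(document)

    def session(self, client_id: str) -> "ClientSession":
        # Copy out only this client's documents; other partitions are never passed on.
        return ClientSession(client_id, tuple(self._docs.get(client_id, ())))


class ClientSession:
    """Read-only view given to an agent; it contains one client's documents only."""

    def __init__(self, client_id: str, documents: tuple):
        self.client_id = client_id
        self._documents = documents

    def search(self, term: str) -> list:
        needle = term.lower()
        return [d for d in self._documents if needle in d.lower()]
```

Because the session for Client X is built without any reference to Client Y's partition, a search run by that agent cannot return Client Y's information, whatever the query is.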

No Model Training on Your Data

The platform must guarantee that your client data is never used to train or improve its AI models. If your data feeds the model, it could surface in responses to other users. For regulated businesses, this is a non-starter.

LaunchLemonade includes all five of these features as core platform capabilities—not premium add-ons.

What Are the Biggest Compliance Risks of Using AI?

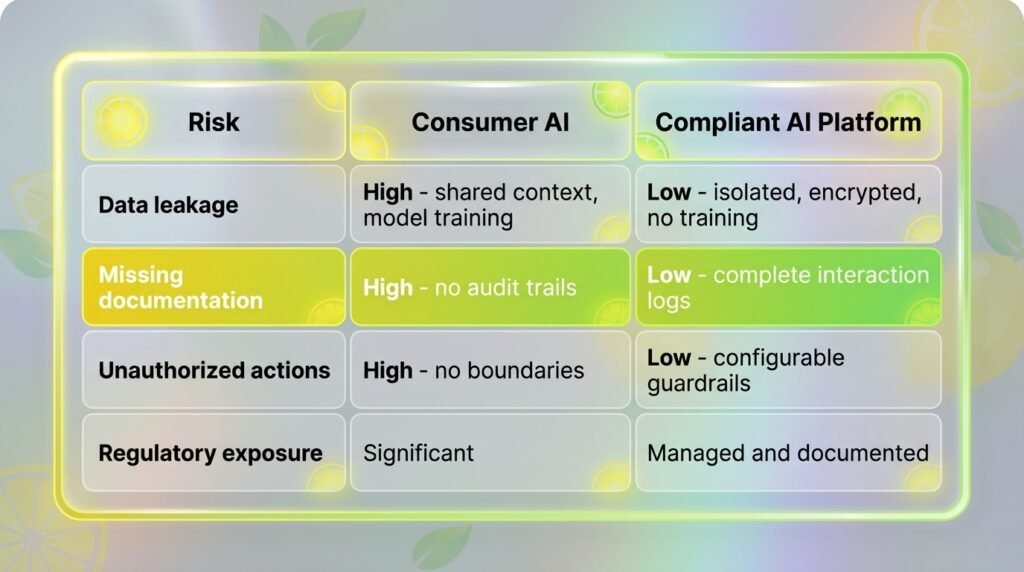

The biggest compliance risks fall into three categories:

Data leakage – Using AI tools that don’t isolate client data means information from one client could appear in responses about another. With consumer AI tools, there’s no guarantee that data you input stays private. Some tools explicitly state they use your inputs to improve their models.

Lack of documentation – Regulators require records. If your AI generates a client recommendation and there’s no log of that interaction, you can’t prove what was said or why. This is like having an unrecorded phone call with a client about their portfolio, except worse, because no person at your firm actually made the recommendation.

Unauthorized actions – AI agents without proper boundaries might generate content that violates regulatory guidelines. A financial AI without guardrails could suggest specific investment products without proper disclaimers, exposing the firm to suitability violations.
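A guardrail of this kind can be sketched as a check that runs on every draft response before it reaches the user. The Python example below is deliberately naive: the `Boundary` class, the keyword matching, and the refusal wording are all assumptions for illustration, and real platforms are likely to use more robust classification than substring checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Boundary:
    blocked_terms: frozenset      # topics the agent must not address at all
    disclaimer_terms: frozenset   # topics that require a disclaimer
    disclaimer: str               # text appended when a disclaimer is required


REFUSAL = "This request is outside what this assistant is permitted to discuss."


def enforce(boundary: Boundary, draft: str) -> str:
    """Apply the boundary to a draft response before it is shown to the user."""
    text = draft.lower()
    # Blocked topics are refused outright rather than merely discouraged.
    if any(term in text for term in boundary.blocked_terms):
        return REFUSAL
    # Sensitive topics pass only with the required disclaimer attached.
    needs_disclaimer = any(term in text for term in boundary.disclaimer_terms)
    if needs_disclaimer and boundary.disclaimer not in draft:
        return f"{draft}\n\n{boundary.disclaimer}"
    return draft
```

The difference between this and a guideline is where the rule lives: the check is applied in code on every response, so the agent cannot skip it.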

How Do You Evaluate an AI Vendor for Compliance?

When evaluating AI vendors, ask these seven questions:

- Where is my data stored, and who can access it? The answer should include specific data centers, encryption methods, and access control policies. Vague answers are red flags.

- Is my data used to train your AI models? The only acceptable answer for regulated businesses is “No, never.”

- Can I export complete audit logs? You need logs that show every interaction, every data access, and every AI response—in a format your compliance team can review.

- How do you handle data isolation between clients? Look for logical separation at minimum, with separate knowledge bases per client or engagement.

- What happens to my data if I cancel? Data should be completely deleted within a defined timeframe, with certification of deletion available.

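- Do you have a SOC 2 attestation or equivalent? A SOC 2 Type II report, which covers how controls operated over a period of time rather than at a single point, is the gold standard for SaaS security. If they don’t have one, ask about their timeline and what interim controls are in place.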

- Can I set enforceable boundaries on what the AI can do? You need to restrict topics, data access, and response types—not just suggest guidelines.

Any vendor that can’t answer these clearly isn’t ready for regulated businesses.

FAQ

Q: Can I use ChatGPT for regulated client work?

A: Not safely, at least not with the consumer version. Consumer ChatGPT doesn’t provide per-client data isolation, exportable audit trails, or enforceable boundaries. Depending on your plan and settings, data entered into ChatGPT may be used for model training. For regulated businesses, purpose-built compliant platforms like LaunchLemonade are the responsible choice.

Q: What’s the difference between “secure” and “compliant” AI?

A: Security is about protecting data from unauthorized access. Compliance is broader—it includes security plus documentation, governance, audit readiness, and adherence to industry-specific regulations. A tool can be secure but not compliant if it lacks audit trails and governance controls.

Q: How much more does compliant AI cost?

A: Compliant AI platforms typically cost $25 to $75 per month (roughly $300 to $900 per year), versus free to $20 per month for consumer tools. But the real comparison is the platform price versus the cost of a compliance violation, which can run from $10,000 to more than $1,000,000 depending on severity and industry.

Q: Do I need compliant AI if I’m a small firm?

A: If you handle client data in a regulated industry, yes—regardless of firm size. Regulators don’t scale their requirements based on your headcount. A solo financial advisor has the same record-keeping obligations as a 500-person firm.

Q: How long does it take to implement compliant AI?

A: On LaunchLemonade, you can have your first compliant AI agent running in under an hour. No coding required—you upload your knowledge base, set the boundaries, and deploy. The governance features are built into the platform, not bolted on after the fact.

Build compliant AI agents for your regulated business at launchlemonade.app