Financial advisors can use AI to improve efficiency without breaking compliance rules, provided they choose platforms with built-in governance. Advisors need audit trails, strict data isolation, role-based access, and a clear compliance record. This guide explains what is allowed, what to avoid, and how to start safely with governed tools like launchlemonade.

Can Advisors Use AI Tools for Financial Advisors Without Breaking Compliance?

Yes, advisors can use AI tools in their practice without violating SEC, FINRA, or state regulations. However, they must prove control and oversight. Regulators care about accountability, not the technology itself. Therefore, you must document how the system accesses data, generates outputs, and supports decisions.

When you use a governed system such as launchlemonade, you gain structured oversight. As a result, you can demonstrate compliance instead of reacting to questions later.

Safe Workflows When Using AI Tools for Financial Advisors

The safest approach limits the AI to preparation support and leaves final decisions to the advisor. In other words, the assistant handles drafts and summaries, and you apply professional judgment.

1. Client Meeting Preparation

The assistant reviews portfolio notes, prior communications, and market updates, then generates a structured meeting brief. Consequently, you can save 30 to 45 minutes per meeting and enter the meeting prepared and focused. Most importantly, you control the advice delivered.

2. Client Communication Drafts

The system drafts review emails, quarterly letters, and follow-ups based on your templates. However, you review and approve each message before sending it. This human review step keeps you compliant. The AI writes the draft, and you make the final decision.

3. Research and Operational Support

You can also request summaries of market reports, prospectuses, or regulatory updates. Instead of reading dozens of pages, you review concise briefs. Additionally, you can automate scheduling, document organization, and internal notes. Since these tasks avoid direct investment advice, they carry lower compliance risk.

What to Avoid When Implementing AI for Financial Advisors

Although AI increases efficiency, advisors must not delegate fiduciary responsibility. Therefore, avoid using any system to generate personalized investment recommendations. You must also avoid automated suitability determinations and unreviewed compliance filings.

Furthermore, never send client-facing advice without licensed review. The pattern is clear: the system prepares information, and you make decisions. This structure protects both your clients and your firm.
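
The "licensed review before sending" rule can be pictured as a simple gate in code. The sketch below is only an illustration under stated assumptions, not any vendor's API: the `Draft` class, the `approve` and `send` functions, and the `is_licensed` flag are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    """A client-facing message produced by an AI assistant (hypothetical model)."""
    client_id: str
    body: str
    approved_by: Optional[str] = None  # set only after a licensed advisor signs off


def approve(draft: Draft, reviewer: str, is_licensed: bool) -> Draft:
    """Record a licensed advisor's approval; reject anyone else."""
    if not is_licensed:
        raise PermissionError(f"{reviewer} is not licensed to approve client advice")
    draft.approved_by = reviewer
    return draft


def send(draft: Draft) -> str:
    """Refuse to send anything that has not been reviewed."""
    if draft.approved_by is None:
        raise RuntimeError("draft has not been approved by a licensed advisor")
    # A real system would hand off to an email service here.
    return f"sent to {draft.client_id} (approved by {draft.approved_by})"
```

In this sketch an unapproved draft can never reach `send` successfully, which mirrors the pattern above: the system prepares, and the advisor decides.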

Governance Requirements for AI Tools in Financial Advisory Firms

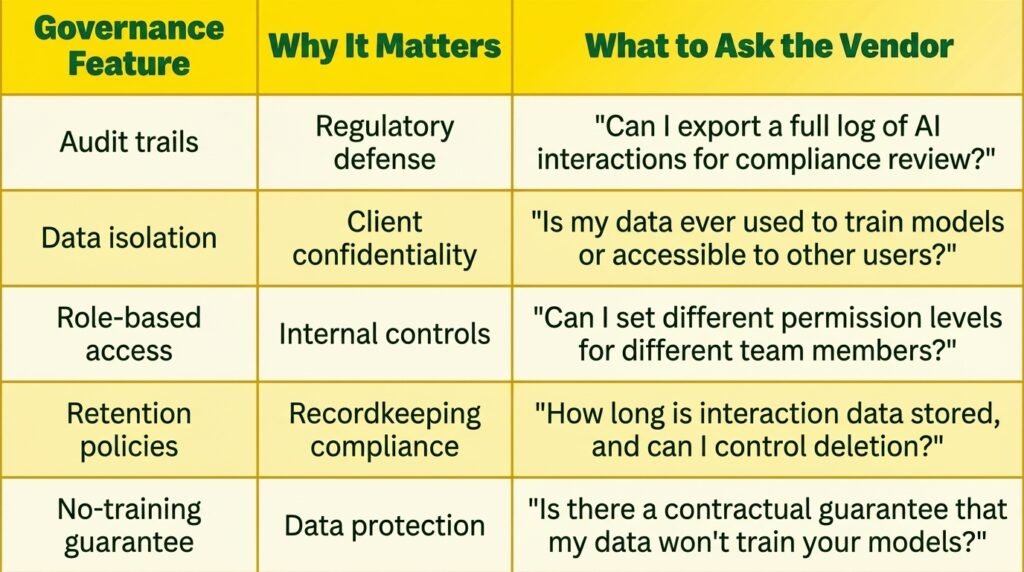

Before adopting any system, confirm that it includes the core governance features below. Without them, compliance risk increases.

1. Audit Trails

The platform must log every interaction. You should see who asked a question, what data the system accessed, and what output it produced. If a regulator asks for documentation, you must provide clear records immediately.

2. Data Isolation

Your client data must remain in your controlled environment. It must not train external models. Therefore, demand written confirmation of data isolation before onboarding any vendor.

3. Role-Based Access and No-Training Policies

Not every team member needs equal access. Junior staff can use automation for operations, while licensed advisors handle communication drafts. In addition, the provider must guarantee that it does not use your data for model training. launchlemonade includes these controls by default, which strengthens your compliance position.
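
To make the audit-trail and role-based-access ideas above concrete, here is a minimal sketch of how the two might fit together. It is an assumption-laden illustration, not how launchlemonade or any other platform actually works: the role names, the `ROLE_PERMISSIONS` map, and the `run_task` and `export_audit_log` functions are hypothetical, and the in-memory list stands in for a proper append-only store.

```python
import json
from datetime import datetime, timezone

# Hypothetical role-to-permission map; a real firm would define its own.
ROLE_PERMISSIONS = {
    "junior_staff": {"scheduling", "document_organization"},
    "licensed_advisor": {"scheduling", "document_organization", "communication_drafts"},
}

audit_log = []  # in-memory stand-in for an append-only audit store


def run_task(user, role, task, data_accessed, output):
    """Check the role's permission, then log who did what with which data."""
    allowed = task in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "task": task,
        "data_accessed": data_accessed,
        "output": output if allowed else None,  # denied attempts are logged too
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"role {role!r} may not run {task!r}")
    return output


def export_audit_log():
    """Serialize the log as JSON lines, one record per interaction."""
    return "\n".join(json.dumps(entry) for entry in audit_log)
```

Each record captures who asked, what data was touched, and what came back, and denied attempts are recorded as well, so the exported log shows both usage and enforcement.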

How to Start Using AI Tools for Financial Advisors Safely

Adopt a phased rollout instead of changing everything at once. First, select a governed platform such as launchlemonade. Next, begin with one low-risk workflow.

1. Weeks 1–2: Meeting Prep

Upload your templates and checklists. Then test the assistant on several client meetings. Review every output carefully. As you evaluate quality, document time saved.

2. Weeks 3–4: Communication Drafts

Add email templates and tone guidance. Generate drafts, review them thoroughly, and send only approved messages. Track efficiency gains.

3. Month 2 and Beyond

Expand into research summaries and operational automation. At this stage, involve your compliance officer in documentation review. Because you started small, scaling becomes controlled and predictable.

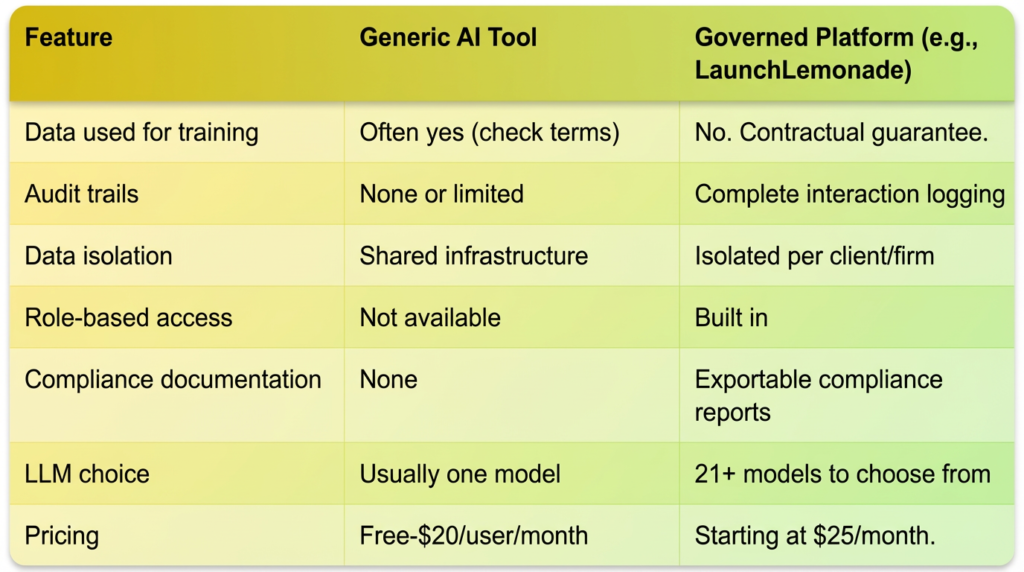

Governed Platforms vs Generic AI Tools

Generic AI tools target broad consumer use. Therefore, they often lack governance controls, audit exports, and contractual data guarantees. In contrast, launchlemonade builds compliance features into its core architecture.

The cost difference is modest, but the risk difference is significant. Regulatory fines can reach hundreds of thousands of dollars, so paying a predictable monthly fee helps protect your practice. launchlemonade offers plans starting at $25 per month and $20 per seat for teams, which include governance controls and multiple LLM options.

If you want structured oversight, book a demo and evaluate how launchlemonade fits your compliance framework. Taking action now positions your firm ahead of regulatory tightening.

Frequently Asked Questions About AI Tools for Financial Advisors

1. What if a regulator questions my AI usage?

You must provide documented evidence of usage, outputs, and review steps. Governed systems export audit logs, which creates a clear compliance trail.

2. Can I use consumer AI tools in my advisory practice?

You may use them for general research without client data. However, avoid entering sensitive information into tools that lack governance guarantees.

3. What is the first workflow to implement?

Start with meeting preparation. It delivers immediate value and keeps client-facing risk low.

Advisors who adopt structured AI systems today strengthen efficiency while maintaining regulatory control. With the right governance, AI becomes a strategic advantage rather than a compliance threat.