Financial advisors can use AI assistants for client communication drafts, meeting prep, research summaries, and operational tasks without violating compliance requirements, but only on platforms with built-in governance. The key requirements: audit trails for every interaction, data isolation (your client data never trains the model), role-based access controls, and a clear compliance paper trail. Governed platforms like LaunchLemonade are built specifically for businesses that can’t afford to get it wrong. This guide covers what’s allowed, what’s risky, and how to start safely.

Can Financial Advisors Use AI Without Breaking Compliance?

Yes. Financial advisors can use AI assistants for a wide range of tasks without violating SEC, FINRA, or state regulatory requirements. The key is choosing a platform with governance built in, not bolted on as an afterthought.

What regulators care about isn’t whether you use AI. It’s whether you can demonstrate control, oversight, and accountability over how AI interacts with client data and produces output that clients rely on.

What AI Tasks Are Safe for Financial Advisors?

The safest AI use cases for financial advisors are tasks where the assistant handles preparation and the advisor handles judgment. Here are the specific workflows that stay well within compliance boundaries:

Client Meeting Preparation

Your assistant reviews a client’s portfolio notes, recent communications, and market conditions, then generates a structured meeting prep document. This saves 30-45 minutes per client meeting. You walk in with a brief instead of spending the first 10 minutes of the meeting remembering where you left off.

Client Communication Drafts

The assistant drafts review emails, quarterly update letters, and follow-up notes based on your templates and tone. You review and approve every message before it’s sent. This is critical: the AI writes, you send. That human-in-the-loop review is what keeps the workflow compliant.
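The "AI writes, you send" rule can be enforced in software rather than left to habit. The sketch below is a minimal illustration, not any platform's actual API: the `Draft` class and the `approve` and `send` functions are hypothetical names, and the send step only returns a string where a real system would call an email service.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    """An AI-written message awaiting advisor review (hypothetical structure)."""
    client_id: str
    body: str
    approved_by: Optional[str] = None  # advisor who signed off, if any


def approve(draft: Draft, advisor: str) -> None:
    """Record that a licensed advisor reviewed and approved the draft."""
    draft.approved_by = advisor


def send(draft: Draft) -> str:
    """Refuse to send anything that has not been approved by a person."""
    if draft.approved_by is None:
        raise PermissionError("Draft has not been reviewed by an advisor")
    # Assumption: a real system would hand off to an email service here.
    return f"sent to {draft.client_id} (approved by {draft.approved_by})"
```

Calling `send` on a fresh draft raises `PermissionError`; only after `approve(draft, "advisor_jane")` does the message go out, which also leaves a record of who signed off.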

Research Summaries

Ask your assistant to summarize market reports, fund prospectuses, or regulatory updates into plain-language briefs. Instead of reading a 47-page report, you review a 2-page summary with the key points highlighted.

Operational Task Handling

This covers appointment scheduling, document organization, invoice management, and internal note formatting. These tasks don’t touch client-facing advice and carry minimal compliance risk.

Client FAQ Responses

Build a knowledge base of your firm’s standard responses to common questions (fee structures, onboarding steps, account access). The assistant handles routine inquiries while flagging anything that requires personalized advice for your attention.
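One way to picture the routing described above is a simple rule: answer from the knowledge base only when the question matches a standard topic, and escalate everything else. The sketch below is deliberately crude and rests on assumptions: the keyword pattern, the topic keys, and the canned answers are all hypothetical, and a production assistant would need far more careful classification than keyword matching.

```python
import re
from typing import Tuple

# Hypothetical trigger words suggesting a request for personalized advice.
ADVICE_PATTERN = re.compile(r"\b(buy|sell|hold|invest|recommend)\b")

# Hypothetical knowledge base of standard firm responses.
FAQ_ANSWERS = {
    "fee": "Our fee schedule is set out in your client agreement.",
    "onboarding": "Onboarding starts with an intake form and an ID check.",
    "account access": "You can reset your login from the client portal.",
}

ESCALATE = ("escalate", "Flagged for advisor review")


def route(question: str) -> Tuple[str, str]:
    """Return ("answer", text) for routine questions, else escalate."""
    text = question.lower()
    # Anything that looks like a request for advice goes to a human first.
    if ADVICE_PATTERN.search(text):
        return ESCALATE
    for topic, answer in FAQ_ANSWERS.items():
        if topic in text:
            return ("answer", answer)
    # Unknown questions are escalated rather than guessed at.
    return ESCALATE
```

The design choice worth noting is the default: when the assistant is unsure, the question goes to the advisor instead of receiving an automated reply.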

What Should Financial Advisors NOT Use AI For?

Financial advisors should not use AI to generate specific investment recommendations, produce client-facing compliance documents without review, or make suitability determinations. These tasks require human judgment, fiduciary responsibility, and regulatory accountability that cannot be delegated to an AI assistant.

Specific no-go areas:

- Personalized investment advice. An AI assistant should never tell a client what to buy, sell, or hold. That’s your fiduciary obligation, not a task to delegate.

- Compliance filings. Form ADV updates, regulatory filings, and disclosure documents require human review and professional sign-off.

- Suitability assessments. Determining whether a product is appropriate for a specific client involves judgment that regulators hold you accountable for.

- Unreviewed client communications. Any message sent to a client should be reviewed by a licensed advisor before it goes out.

The pattern is clear: AI handles the preparation. You handle the decisions. AI drafts the email. You review and click send. AI summarizes the research. You decide what it means for the client.

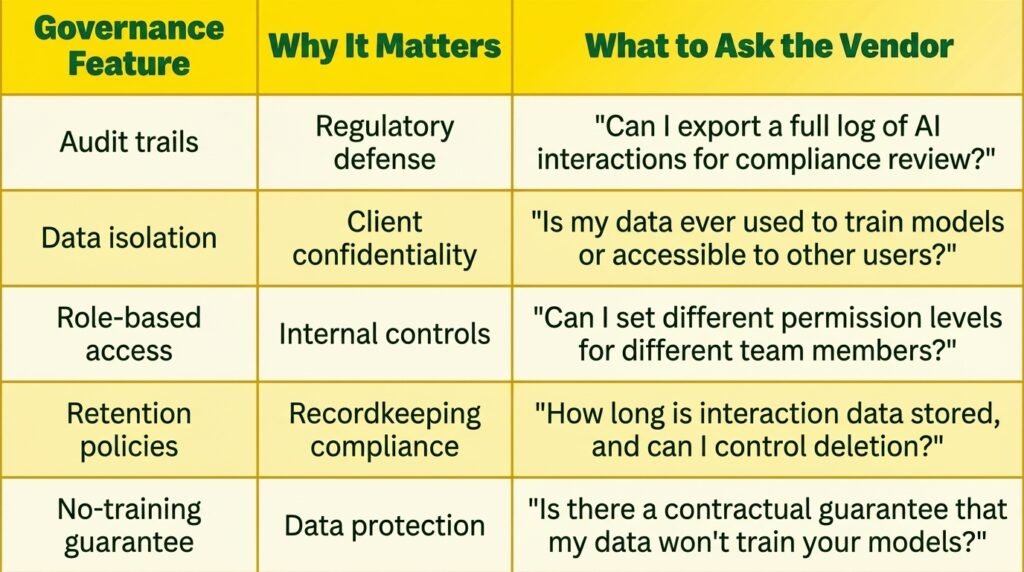

What Governance Features Should Financial Advisors Require?

Financial advisors should require five specific governance features before deploying any AI tool that touches client data: audit trails, data isolation, role-based access, retention policies, and a no-training guarantee. Four of these are detailed below.

1. Complete Audit Trails

Every interaction between the AI and client data should be logged. Who asked what, when they asked it, and what the AI responded. If a regulator asks “show me how AI was used in this client’s account,” you need a clear answer, not a shrug.
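To make "who asked what, when, and what the AI responded" concrete, here is a minimal sketch of an append-only audit log written as one JSON record per line. The field names, the file layout, and both function names are assumptions for illustration; they do not describe how LaunchLemonade or any other platform stores its logs.

```python
import json
from datetime import datetime, timezone


def log_interaction(path, user, client_id, prompt, response):
    """Append one audit record: who asked, when, what, and the AI's reply."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "client_id": client_id,
        "prompt": prompt,
        "response": response,
    }
    # Append mode keeps earlier records intact.
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record


def interactions_for_client(path, client_id):
    """Answer "show me how AI was used in this client's account"."""
    with open(path, encoding="utf-8") as f:
        records = [json.loads(line) for line in f if line.strip()]
    return [r for r in records if r["client_id"] == client_id]
```

With records in this shape, a regulator's request becomes a single lookup, for example `interactions_for_client("audit.jsonl", "C-1")`.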

2. Data Isolation

Your client data should never leave your instance. It should never be used to train the underlying AI model. It should never be accessible to other users of the platform. This is non-negotiable. If a platform can’t guarantee data isolation in writing, don’t use it.

3. Role-Based Access Controls

Not everyone in your firm should have the same level of AI access. Junior staff might use the assistant for scheduling and document formatting. Only licensed advisors should use it for client communication drafts. Your platform should support these distinctions.
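The distinction between junior staff and licensed advisors can be expressed as a small permission table. This is a sketch under stated assumptions: the role names, task names, and the `can_use` function are hypothetical and stand in for whatever roles your platform actually exposes.

```python
# Hypothetical mapping of firm roles to the AI tasks each may run.
ROLE_PERMISSIONS = {
    "junior_staff": {"scheduling", "document_formatting"},
    "licensed_advisor": {
        "scheduling",
        "document_formatting",
        "client_communication_drafts",
    },
}


def can_use(role: str, task: str) -> bool:
    """Return True only if the role is explicitly granted the task."""
    # Unknown roles get an empty permission set, so access is denied by default.
    return task in ROLE_PERMISSIONS.get(role, set())
```

Here `can_use("junior_staff", "client_communication_drafts")` is `False`, while the same task is allowed for `"licensed_advisor"`. Denying by default means a new or misspelled role never gains access by accident.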

4. No-Training Guarantee

The platform must guarantee, in its terms of service, that your data is not used to train or improve AI models. This is the line that separates governed platforms from consumer tools. Consumer tools such as ChatGPT’s free tier may use your inputs for model training unless you opt out. A governed platform like LaunchLemonade does not.

How Do Financial Advisors Get Started With AI Safely?

Start with one low-risk workflow, prove it works within your compliance framework, then expand. Don’t try to transform your entire practice in a week. The advisors who succeed with AI are the ones who start small and build confidence.

Week 1-2: Set up meeting prep.

Choose a governed platform like LaunchLemonade. Upload your client meeting template and standard preparation checklist. Use the assistant to prepare for 3-5 client meetings. Review every output carefully. Note what works and what needs adjustment.

Week 3-4: Add client communication drafts.

Upload your email templates, quarterly letter format, and tone guidelines. Have the assistant draft communications for 5-10 clients. Review every draft before sending. Track time saved versus manual drafting.

Week 5-6: Expand to research summaries.

Start feeding the assistant market reports and fund documents. Ask for plain-language summaries. Compare the summaries against your own reading to calibrate quality.

Month 2+: Scale what works.

By this point, you’ll know which workflows save the most time and which ones your compliance officer is comfortable with. Expand into operational automation, client FAQ handling, and more complex multi-step workflows.

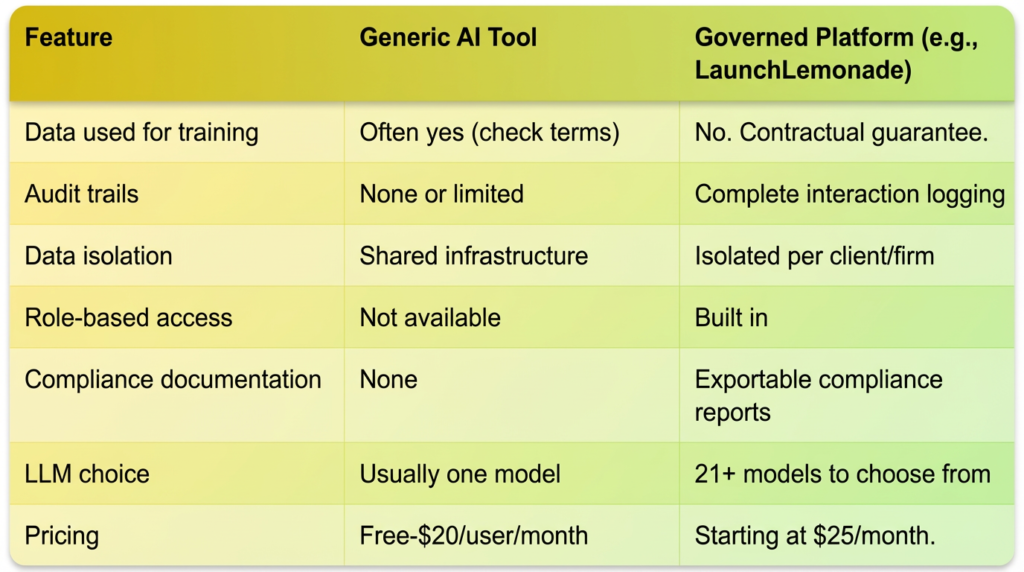

How Does a Governed AI Platform Differ from Generic AI Tools?

A governed AI platform is built from the ground up for businesses that handle sensitive data, with compliance features as core architecture rather than optional add-ons. Generic AI tools (ChatGPT, Gemini, generic chatbot builders) are designed for broad consumer use and lack the governance controls that regulated businesses need.

The price difference is minimal. The risk difference is enormous. A compliance violation can cost a financial advisory firm $50,000-$500,000 in fines plus reputational damage. Spending $20-25/month on a governed platform is insurance, not an expense.

Financial advisors who adopt governed AI platforms now are ahead of the curve. When regulations tighten (and they will), you’ll already have the audit trails, data controls, and compliance documentation in place. The firms that adopted ungoverned tools will have to scramble, either migrating to a new platform or building compliance frameworks retroactively.

LaunchLemonade is built for this future. Every AI assistant created on the platform comes with governance features by default: audit trails for every interaction, data controls that keep client information isolated, and the ability to export compliance documentation on demand. It’s AI built for businesses that can’t afford to get it wrong.

Frequently Asked Questions

What happens if a regulator asks about my AI use?

You need to be able to show what the AI was used for, what data it accessed, what outputs it produced, and who reviewed those outputs. Governed platforms like LaunchLemonade provide exportable audit logs that document every interaction, giving you a clear paper trail for regulatory inquiries.

Can I use ChatGPT for my financial advisory practice?

Using consumer AI tools like ChatGPT for tasks involving client data carries significant compliance risk. These tools may use your inputs for model training, lack audit trails, and don’t provide the data isolation or governance controls that regulated businesses need. For operational tasks that don’t involve client data (like general research), the risk is lower. For anything touching client information, use a governed platform.

How much does a compliance-ready AI platform cost?

Governed AI platforms for financial advisors typically cost between $20 and $150 per month, depending on features and team size. LaunchLemonade plans start at $25/month for personal use and $20/seat/month for teams, including governance features, 21+ LLM choices, and knowledge base capabilities.

What’s the first AI workflow a financial advisor should set up?

Start with client meeting preparation. It’s low-risk (no client-facing output), immediately valuable (saves 30-45 minutes per meeting), and gives you a chance to evaluate the platform’s quality before expanding to more sensitive workflows like client communication drafts.

Ready to use AI in your practice without compromising compliance? Try LaunchLemonade’s governed platform.