For regulated professional services

Your back office, on autopilot.

Meetings, emails, research, reporting — handled by AI agents built for finance firms. Governed by default. No engineering team required.

The problem

In professional services — from financial advisors to consultants — teams spend 41% of their workweek on back-office and admin instead of clients. Your tools don't talk to each other. Your team does work that should take minutes, not hours.

Source: Kitces Research — How Financial Advisors Actually Spend Their Time

Free 30-second audit

The average advisor loses 22 hours a week to admin.

That's 41% of the workweek, according to Kitces Research. Three taps and you'll see exactly how much your firm is losing, where it's going, and what it's costing you per year. No email required.

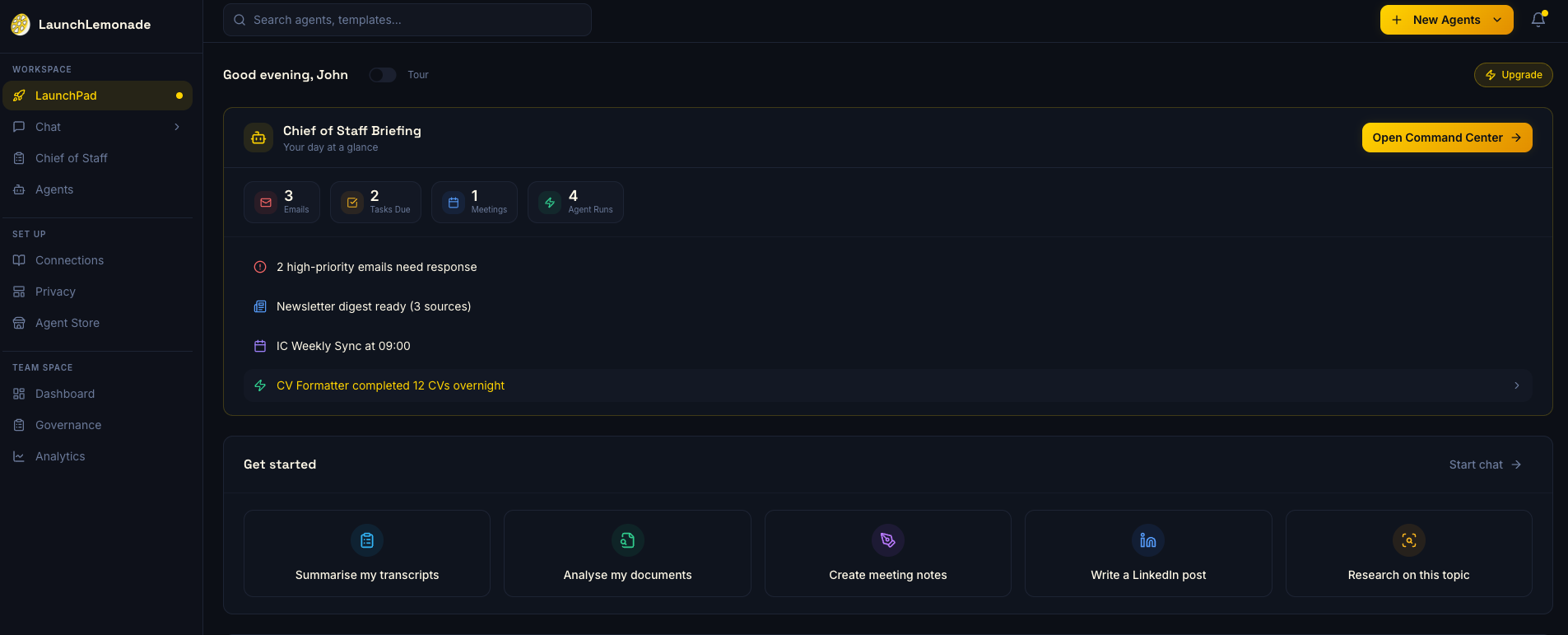

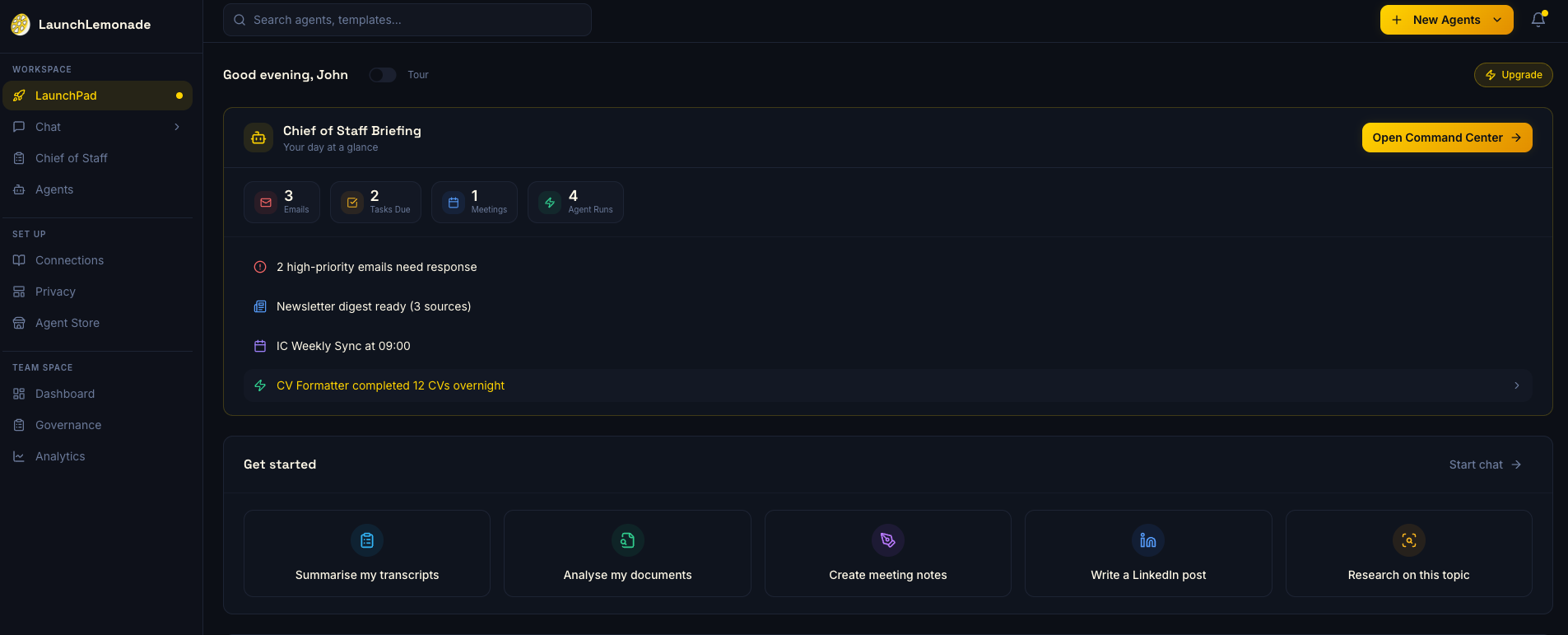

A day at your firm

Your Monday morning.

Built for your industry

Built for how your firm actually works.

Not another generic AI tool. Agents, workflows, and knowledge bases designed for your industry.

Your team does audit prep at midnight. It doesn't have to.

- Meeting notes from every client call, auto-tagged by engagement (Built-in)

- Task and project management tools (Built-in)

- Client onboarding that collects documents and flags what's missing (Customized)

- Compliance checks run before the partner even reviews (Customized)

- Tax research agents that pull from your firm's prior-year workpapers (Customized)

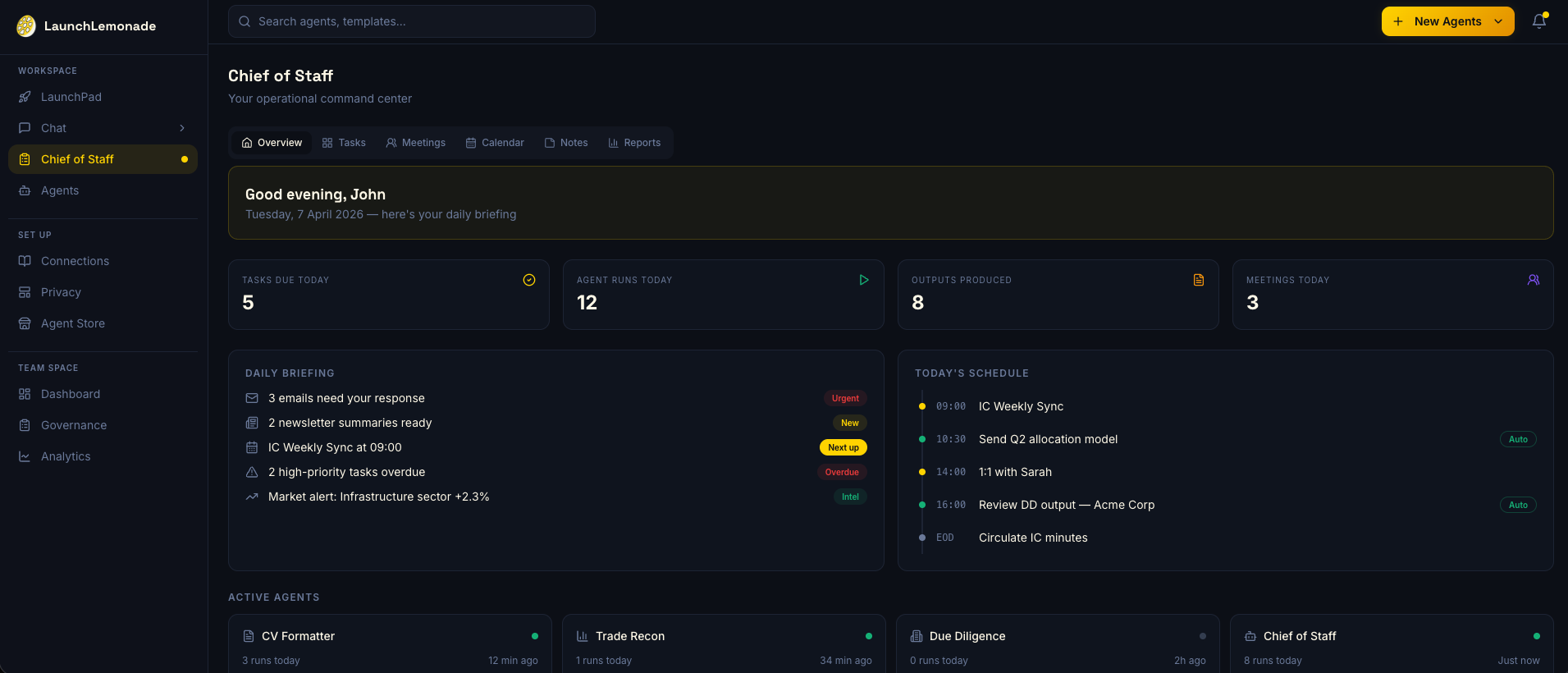

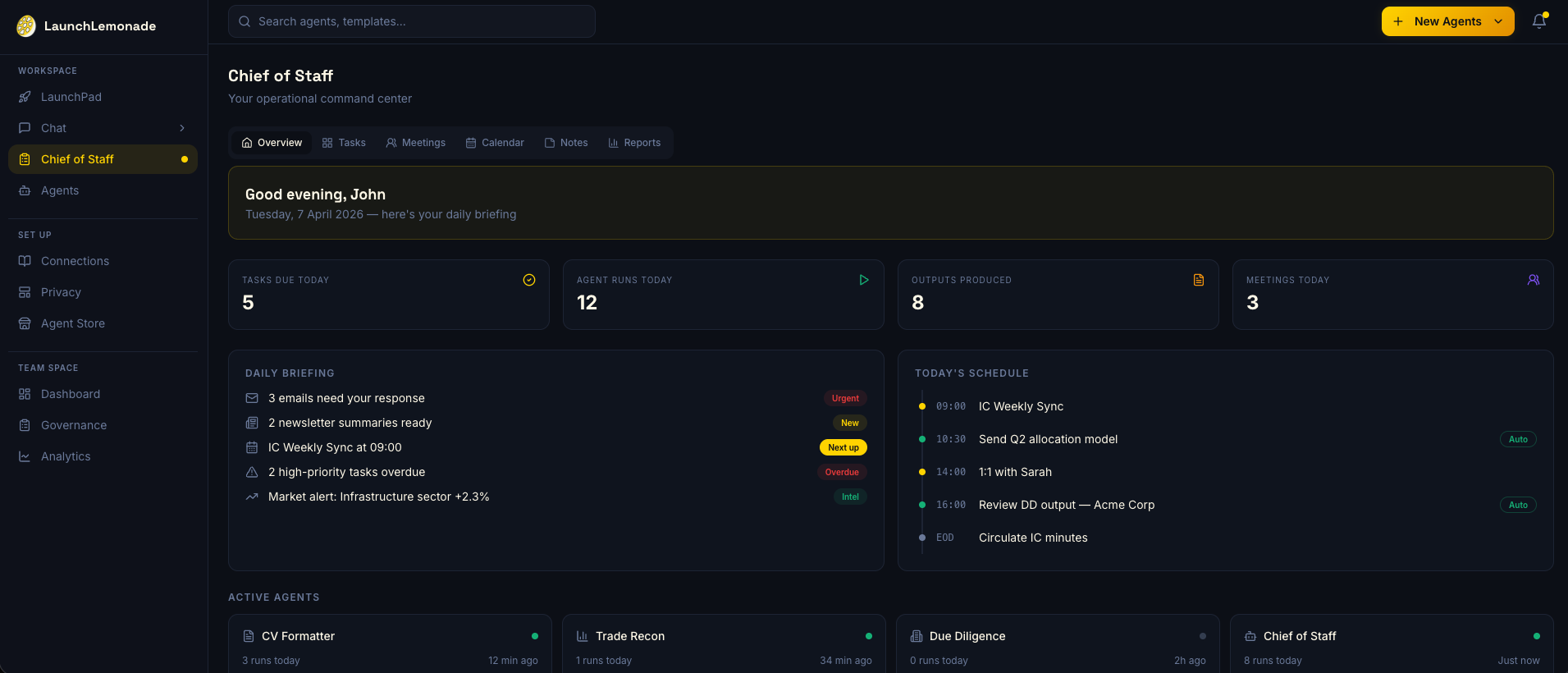

The platform

What your Chief of Staff handles.

Three core capabilities, ready out of the box. There's a lot more inside the platform.

Meetings → Intelligence

Every client call produces notes, action items, and follow-up emails. Automatically. No more “can someone send the recap?”

Email → Managed

Your inbox is triaged by AI that knows which clients matter, which emails need you, and which ones don’t. Calendar conflicts resolved before you see them.

Knowledge → Searchable

Ask a question. Get an answer from every meeting, email, and document your firm has ever touched. Your firm’s institutional knowledge, finally accessible.

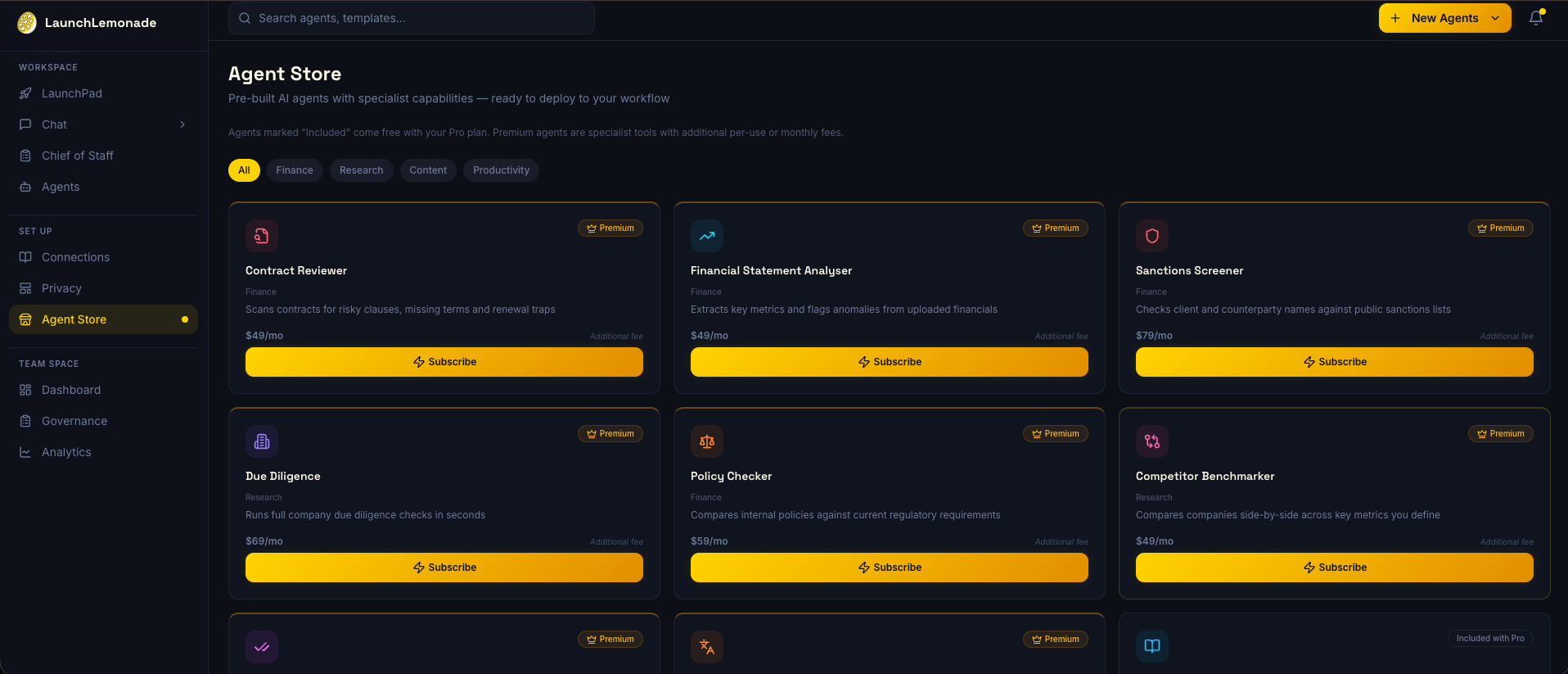

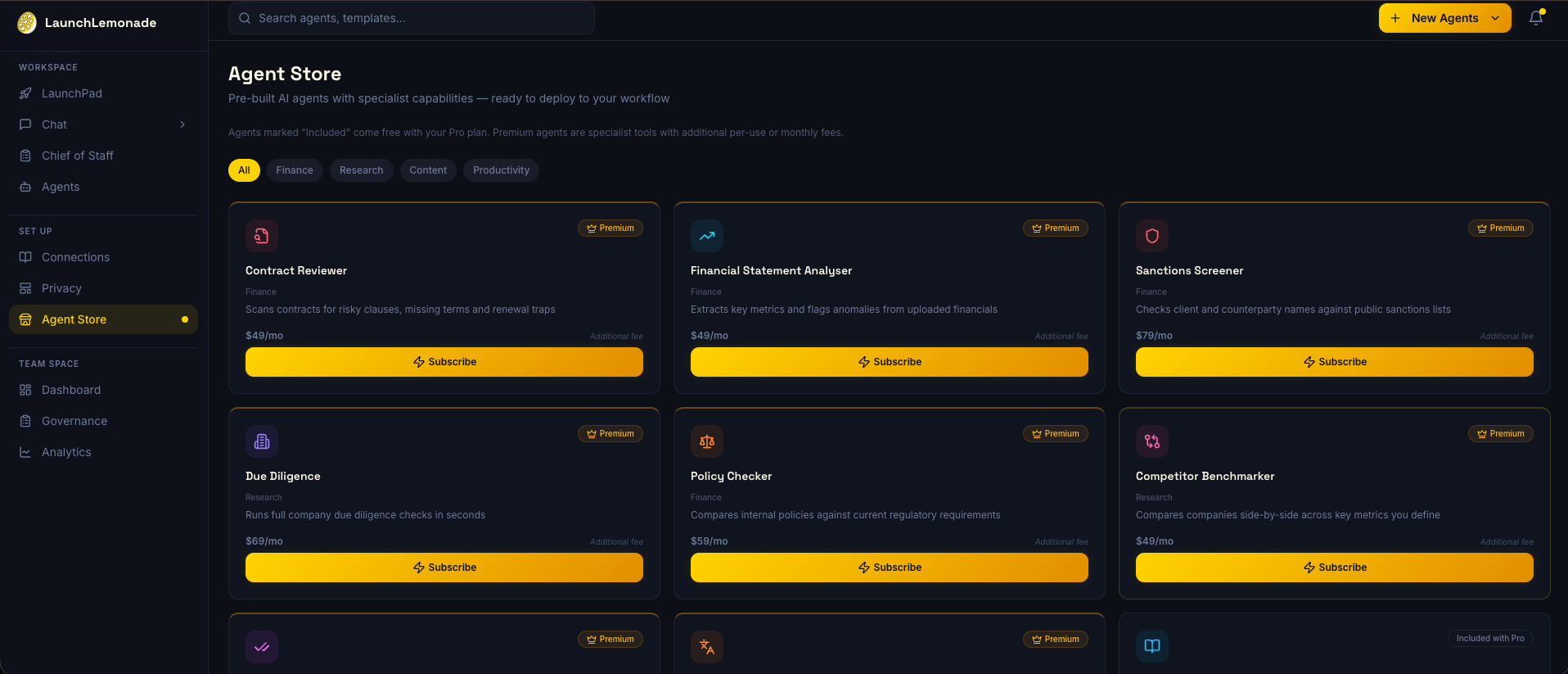

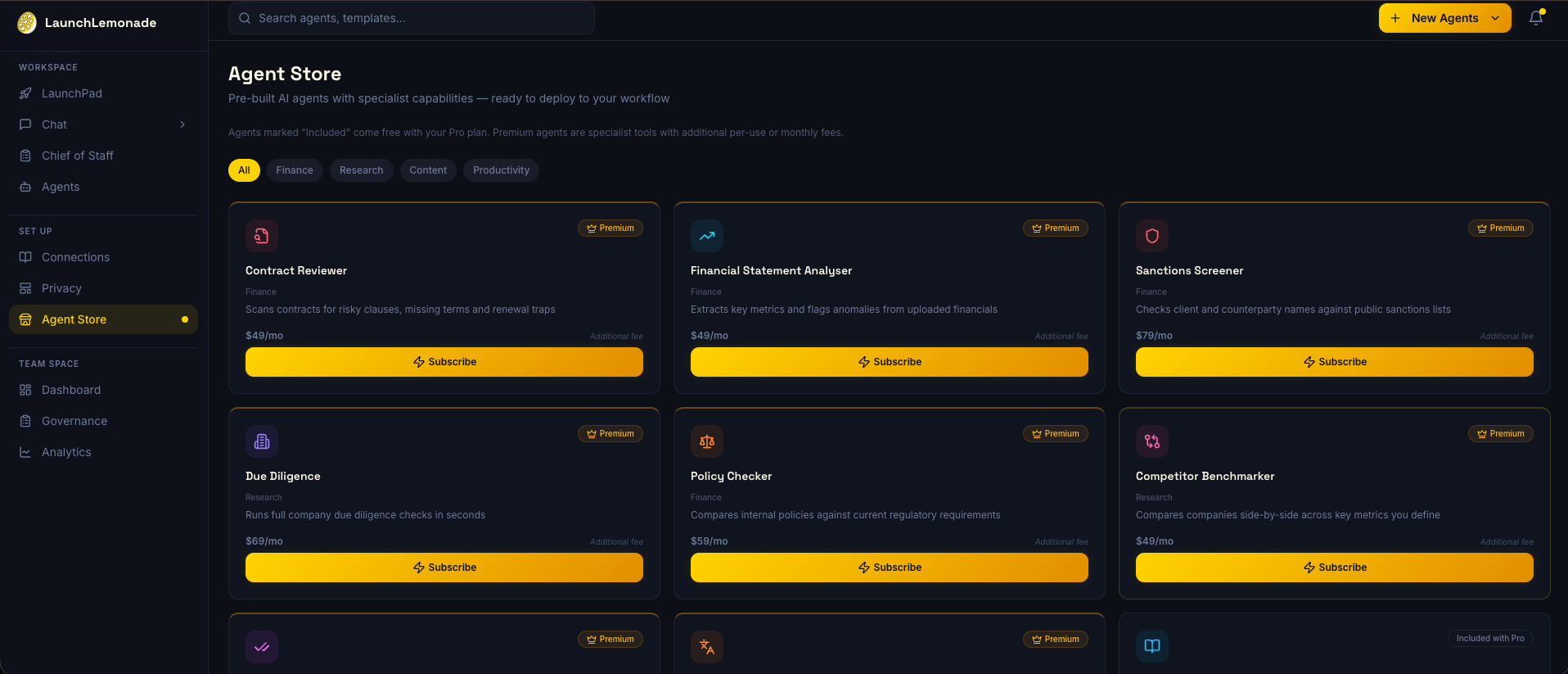

Agent Store

Pre-built agents. One-click install.

AI agents that handle the work your team shouldn't be doing. Due diligence, compliance, client onboarding, audit prep.

Powered by 50+ model variants

Including GPT, Claude, Gemini, and open-source models. Text, audio, documents, presentations, and spreadsheets.

Trust & compliance

Trust, earned not claimed.

Regulated finance firms deserve straight answers on AI governance. Here's exactly where we stand.

On the roadmap

SOC 2 Type I

Targeted for Q2 2026. Continuous monitoring is live and our policy suite is in place. We'll share the report the moment it lands.

On the roadmap

EU AI Act readiness

Preparing for the 2026 deadlines. Not there yet. We'll tell you exactly what we support before you sign anything.

Built in today

Governance by default

Audit logs, role-based access, and data retention controls on every agent. Not a setting you enable — the way the platform works.

Services

Want us to set it up for your firm?

We also build custom agents, train teams, and white-label the platform for firms that want to offer AI under their own brand.

AI Consulting

We figure out where AI fits in your firm, then we build it. Strategy, custom agents, private infrastructure, and integration into your existing tools.

Learn more

AI Training

Your team learns to automate deliverables, build AI agents, and use AI safely with client data. They ship their first agent before the day ends.

Learn more

White Label

Offer AI agents to your clients under your own brand. We run the infrastructure.

Learn more

Get started

Ready to stop wasting hours?

Start using the platform, or book a call to see how it fits your firm.